A/B Testing on Steroids: How AI Predicts Winners Before You Do

AI is transforming how we approach A/B testing, making it faster and smarter than ever before. It addresses traditional multivariate testing challenges by predicting which combinations are most likely to succeed and prioritising those tests.

I break down the shift from Frequentist to Bayesian logic and why tools like Unbounce are changing the game.

A/B testing used to require technical setup, careful planning, and patience. Not anymore.

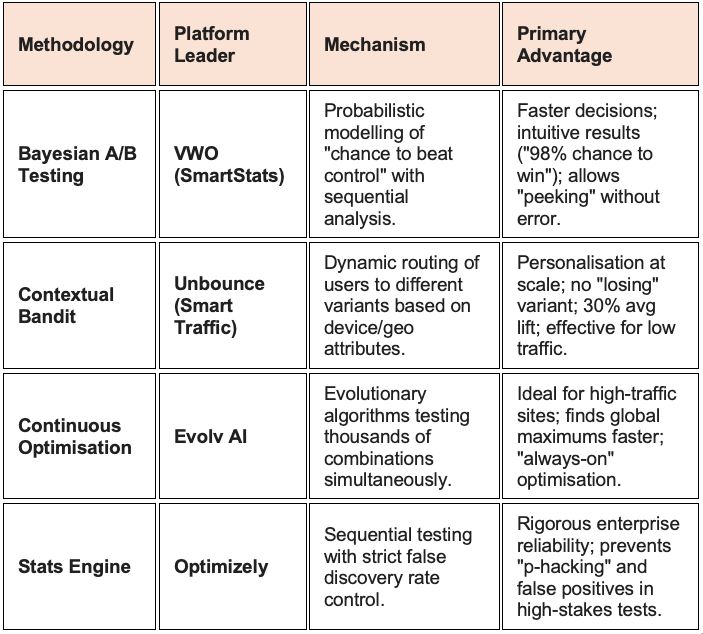

The philosophy of experimentation is shifting from the traditional "Frequentist" A/B test, which requires fixed sample sizes and lengthy durations, to faster, more adaptive methodologies like Bayesian Statistics and Multi-Armed Bandit (MAB) algorithms.

This shift is driven by the need for speed and the opportunity cost of sending traffic to losing variations.
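To make the opportunity-cost argument concrete, here is a minimal sketch of one common Multi-Armed Bandit strategy, Thompson sampling. It is an illustration only, not the implementation of any tool mentioned in this article; the function names and the conversion rates in the example are made up.

```python
import random


def thompson_pick(successes, failures, rng):
    """Sample a plausible conversion rate for each variant and pick the highest."""
    draws = [rng.betavariate(s + 1, f + 1) for s, f in zip(successes, failures)]
    return max(range(len(draws)), key=draws.__getitem__)


def simulate(true_rates, visitors, seed=0):
    """Return how many visitors each variant received under Thompson sampling."""
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    for _ in range(visitors):
        arm = thompson_pick(successes, failures, rng)
        # Simulated visitor: converts with the variant's (hidden) true rate.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return [s + f for s, f in zip(successes, failures)]


if __name__ == "__main__":
    # Hypothetical true conversion rates for three variants.
    print(simulate([0.05, 0.10, 0.20], visitors=5000))
```

A fixed-split test would send roughly a third of the traffic to each variant for the whole run. The bandit shifts traffic towards the stronger variant as evidence accumulates, which is exactly the saving described above.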

Machine learning algorithms surface deeper insights from test data that may not be obvious through manual analysis. The technology continuously learns from incoming data, adjusting test parameters in real time. AI takes multivariate testing to the next level by automating intricate tasks like experiment setup and managing a wide range of variable combinations, enabling quicker, data-backed decisions.

Bayesian vs. Frequentist: The Speed Revolution

Traditional Frequentist testing (used by legacy tools) asks: "Is there a statistically significant difference between A and B?" This methodology is rigorous but slow: it requires waiting until a predetermined sample size is reached, which often wastes traffic on poor performers and prohibits "peeking" at results.

Modern platforms like VWO (SmartStats) have adopted a Bayesian engine. This approach asks: "What is the probability that B is better than A?"

Benefits of Bayesian: It allows "peeking" at results without invalidating the test. It provides more intuitive answers (e.g., "Variation B has a 95% chance of being the winner") and typically reaches a decision point faster than Frequentist methods, reducing time-to-insight.
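The "probability that B is better than A" can be estimated in a few lines. The sketch below assumes a uniform Beta(1, 1) prior on each conversion rate and uses Monte Carlo sampling; it is a textbook Beta-Binomial model, not VWO's SmartStats engine, and the visitor counts are invented for illustration.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=0):
    """Estimate P(rate_B > rate_A) from Beta posteriors with a uniform prior."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior for each variant: Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


if __name__ == "__main__":
    # Hypothetical data: A converts 100 of 2,000 visitors, B converts 130 of 2,000.
    p = prob_b_beats_a(100, 2000, 130, 2000)
    print(f"Probability that B beats A: {p:.1%}")
```

The output is a single probability that can be recomputed whenever new data arrives, which is what makes the Bayesian answer easier to read than a p-value.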

VWO SmartStats: VWO's engine is specifically tuned to handle "sequential testing," allowing marketers to make decisions continuously as data comes in. It also includes "false discovery rate" controls to prevent the common error of calling a winner too early due to random noise, balancing the need for speed with statistical integrity. VWO applies AI to the creative side as well: once you open the Visual Editor and click on any piece of text, you'll find a 'Suggest Variations' option that displays AI-powered copy suggestions based on your existing copy.

Smart Traffic and Contextual Bandits

Unbounce has popularised a feature called Smart Traffic, which abandons the concept of a single "winner" entirely. Instead, it uses a Contextual Multi-Armed Bandit approach.

Routing vs. Winning: In a traditional A/B test, you eventually choose one variant for everyone. Smart Traffic keeps all variants active and uses AI to route specific visitors to the variant they are most likely to convert on.

Attribute Analysis: The AI analyses visitor attributes (device, location, browser, OS, time of day) and learns correlations (e.g., "iOS users in New York convert better on the minimalist design (Variant A), while Android users in London prefer the detailed long-form copy (Variant B)").
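The routing idea can be sketched as a very simple contextual bandit that keeps separate statistics for each visitor context. This is an illustrative toy, not Unbounce's Smart Traffic algorithm (whose internals are not described here): the `ContextualRouter` class, the single "device" context, and the conversion rates are all assumptions made for the example.

```python
import random
from collections import defaultdict


class ContextualRouter:
    """One Beta posterior per (visitor context, variant) pair."""

    def __init__(self, variants, seed=0):
        self.variants = list(variants)
        self.rng = random.Random(seed)
        # stats[context][variant] = [conversions, non_conversions]
        self.stats = defaultdict(lambda: {v: [0, 0] for v in self.variants})

    def route(self, context):
        """Thompson sampling restricted to the visitor's own context."""
        arms = self.stats[context]

        def sampled_rate(variant):
            wins, losses = arms[variant]
            return self.rng.betavariate(wins + 1, losses + 1)

        return max(self.variants, key=sampled_rate)

    def record(self, context, variant, converted):
        self.stats[context][variant][0 if converted else 1] += 1

    def traffic(self, context):
        """Visitors sent to each variant so far for this context."""
        return {v: sum(counts) for v, counts in self.stats[context].items()}


def simulate(true_rates, visitors_per_context=2000, seed=0):
    """Route simulated visitors and return the trained router."""
    rng = random.Random(seed)
    variants = sorted(next(iter(true_rates.values())))
    router = ContextualRouter(variants, seed=seed)
    for _ in range(visitors_per_context):
        for context, rates in true_rates.items():
            variant = router.route(context)
            router.record(context, variant, rng.random() < rates[variant])
    return router


# Hypothetical conversion rates: each device type prefers a different variant.
TRUE_RATES = {
    "ios": {"A": 0.20, "B": 0.05},
    "android": {"A": 0.05, "B": 0.20},
}

if __name__ == "__main__":
    router = simulate(TRUE_RATES)
    for context in TRUE_RATES:
        print(context, router.traffic(context))
```

No variant is ever declared the overall winner here. Each context simply drifts towards the variant that converts best for it, and a production system would generalise across many attributes with a learned model rather than a lookup table.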

Benchmarks: This approach reportedly starts optimising after as few as 50 visits and delivers an average conversion lift of around 30% compared to a traditional A/B test. It excels in low-traffic scenarios where traditional testing would take months to reach significance. It essentially creates a personalised experience for every visitor segment without manual rule-setting.

Continuous Optimisation with Evolv AI

Evolv AI takes experimentation to the enterprise level with Continuous Optimisation. Unlike A/B testing, which compares two discrete pages, Evolv AI tests thousands of potential combinations of elements (headlines, images, button colours) simultaneously.

Active Learning: The system uses evolutionary algorithms to "breed" high-performing design elements. If a specific headline works well, it is combined with other winning elements in subsequent generations of the page. This mimics natural selection, constantly evolving the user experience to maximise the objective function (conversion rate).
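The "breeding" loop can be illustrated with a small genetic algorithm. This is a generic sketch of the technique, not Evolv AI's system: the element names, the `HIDDEN_LIFT` table, and the noise-free `fitness` function are invented stand-ins for conversion rates that would really be measured on live traffic.

```python
import random

# Candidate values for each page element (hypothetical).
OPTIONS = {
    "headline": ["H1", "H2", "H3", "H4"],
    "image": ["I1", "I2", "I3"],
    "button": ["green", "orange", "blue"],
}

# Made-up contribution of a few elements to conversion rate.
HIDDEN_LIFT = {"H3": 0.03, "I2": 0.02, "orange": 0.02}


def fitness(design):
    """Stand-in for a measured conversion rate (noise-free for simplicity)."""
    return 0.05 + sum(HIDDEN_LIFT.get(choice, 0.0) for choice in design.values())


def random_design(rng):
    return {slot: rng.choice(choices) for slot, choices in OPTIONS.items()}


def crossover(a, b, rng):
    """Child inherits each element from one of its two parents."""
    return {slot: rng.choice([a[slot], b[slot]]) for slot in OPTIONS}


def mutate(design, rng, rate=0.1):
    """Occasionally swap an element for a random alternative."""
    return {
        slot: rng.choice(OPTIONS[slot]) if rng.random() < rate else choice
        for slot, choice in design.items()
    }


def evolve(generations=20, population_size=12, seed=0):
    rng = random.Random(seed)
    population = [random_design(rng) for _ in range(population_size)]
    for _ in range(generations):
        # Keep the better half, then breed replacements from the survivors.
        population.sort(key=fitness, reverse=True)
        parents = population[: population_size // 2]
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(population_size - len(parents))
        ]
        population = parents + children
    return max(population, key=fitness)


if __name__ == "__main__":
    best = evolve()
    print(best, round(fitness(best), 3))
```

Because the surviving parents carry over unchanged, the best design never gets worse from one generation to the next. With real, noisy conversion data the fitness of each design would have to be re-estimated continuously, which is where the "always-on" framing comes from.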

Strategic Shift: This moves experimentation from a "project-based" activity (running a test for 2 weeks) to an "always-on" infrastructure where the website is permanently in a state of self-improvement, dynamically adapting to changes in user behaviour or market conditions.

Other key tools include Fibr.AI, designed for collaborative, AI-powered A/B testing, letting marketing teams quickly create, launch, and analyse experiments in real time. The platform has built-in chat-style collaboration, lets teams create multiple test variants using AI suggestions, and provides seamless integration with analytics tools for clear reporting and faster decision-making. Its no-code editor, predictive targeting, and continuous optimisation help teams find top-performing campaigns and boost conversions together, even at scale.

According to Kameleoon, companies expecting significant growth were more likely to have mature experimentation strategies and unified testing processes, and AI played a bigger role in testing maturity in 2025.